28 Utility functions

28.1 Selecting data by date

Selecting by date/time in R can be intimidating for new users—and time-consuming for all users. The selectByDate() function aims to make this easier by allowing users to select data based on the British way of expressing date i.e. d/m/y. This function should be very useful in circumstances where it is necessary to select only part of a data frame.

First load the packages we need.

## select all of 1999

data.1999 <- selectByDate(mydata, start = "1/1/1999", end = "31/12/1999")

head(data.1999)# A tibble: 6 × 10

date ws wd nox no2 o3 pm10 so2 co pm25

<dttm> <dbl> <int> <int> <int> <int> <int> <dbl> <dbl> <int>

1 1999-01-01 00:00:00 5.04 140 88 35 4 21 3.84 1.02 18

2 1999-01-01 01:00:00 4.08 160 132 41 3 17 5.24 2.7 11

3 1999-01-01 02:00:00 4.8 160 168 40 4 17 6.51 2.87 8

4 1999-01-01 03:00:00 4.92 150 85 36 3 15 4.18 1.62 10

5 1999-01-01 04:00:00 4.68 150 93 37 3 16 4.25 1.02 11

6 1999-01-01 05:00:00 3.96 160 74 29 5 14 3.88 0.725 NAtail(data.1999)# A tibble: 6 × 10

date ws wd nox no2 o3 pm10 so2 co pm25

<dttm> <dbl> <int> <int> <int> <int> <int> <dbl> <dbl> <int>

1 1999-12-31 18:00:00 4.68 190 226 39 NA 29 5.46 2.38 23

2 1999-12-31 19:00:00 3.96 180 202 37 NA 27 4.78 2.15 23

3 1999-12-31 20:00:00 3.36 190 246 44 NA 30 5.88 2.45 23

4 1999-12-31 21:00:00 3.72 220 231 35 NA 28 5.28 2.22 23

5 1999-12-31 22:00:00 4.08 200 217 41 NA 31 4.79 2.17 26

6 1999-12-31 23:00:00 3.24 200 181 37 NA 28 3.48 1.78 22## easier way

data.1999 <- selectByDate(mydata, year = 1999)

## more complex use: select weekdays between the hours of 7 am to 7 pm

sub.data <- selectByDate(mydata, day = "weekday", hour = 7:19)

## select weekends between the hours of 7 am to 7 pm in winter (Dec, Jan, Feb)

sub.data <- selectByDate(

mydata,

day = "weekend",

hour = 7:19,

month = c(12, 1, 2)

)The function can be used directly in other functions. For example, to make a polar plot using year 2000 data:

polarPlot(selectByDate(mydata, year = 2000), pollutant = "so2")28.2 Making intervals — cutData

The cutData() function is a utility function that is called by most other functions but is useful in its own right. Its main use is to partition data in many ways, many of which are built-in to openair

Note that all the date-based types e.g. month/year are derived from a column date. If a user already has a column with a name of one of the date-based types it will not be used.

For example, to cut data into seasons:

# A tibble: 6 × 11

date ws wd nox no2 o3 pm10 so2 co pm25

<dttm> <dbl> <int> <int> <int> <int> <int> <dbl> <dbl> <int>

1 1998-01-01 00:00:00 0.6 280 285 39 1 29 4.72 3.37 NA

2 1998-01-01 01:00:00 2.16 230 NA NA NA 37 NA NA NA

3 1998-01-01 02:00:00 2.76 190 NA NA 3 34 6.83 9.60 NA

4 1998-01-01 03:00:00 2.16 170 493 52 3 35 7.66 10.2 NA

5 1998-01-01 04:00:00 2.4 180 468 78 2 34 8.07 8.91 NA

6 1998-01-01 05:00:00 3 190 264 42 0 16 5.50 3.05 NA

# ℹ 1 more variable: season <ord>This adds a new column season that is split into four seasons. There is an option hemisphere that can be used to use southern hemisphere seasons when set as hemisphere = "southern".

The type can also be another column in a data frame. If this column is numeric, it is divided into n.levels quantiles (defaulting to four), which is to say bins of roughly equal numbers of PM10 concentrations.

# A tibble: 6 × 10

date ws wd nox no2 o3 pm10 so2 co pm25

<dttm> <dbl> <int> <int> <int> <int> <fct> <dbl> <dbl> <int>

1 1998-01-01 00:00:00 0.6 280 285 39 1 pm10 27 t… 4.72 3.37 NA

2 1998-01-01 01:00:00 2.16 230 NA NA NA pm10 36 t… NA NA NA

3 1998-01-01 02:00:00 2.76 190 NA NA 3 pm10 27 t… 6.83 9.60 NA

4 1998-01-01 03:00:00 2.16 170 493 52 3 pm10 27 t… 7.66 10.2 NA

5 1998-01-01 04:00:00 2.4 180 468 78 2 pm10 27 t… 8.07 8.91 NA

6 1998-01-01 05:00:00 3 190 264 42 0 pm10 1 to… 5.50 3.05 NAThis use of cutData() is quite destructive — the PM concentraitons are no longer in the data frame. You can use either the names or suffix arguments to get around this.

Rows: 63,371

Columns: 11

$ date <dttm> 1998-01-01 00:00:00, 1998-01-01 01:00:00, 1998-01-01 0…

$ ws <dbl> 0.60, 2.16, 2.76, 2.16, 2.40, 3.00, 3.00, 3.00, 3.36, 3…

$ wd <int> 280, 230, 190, 170, 180, 190, 140, 170, 170, 170, 180, …

$ nox <int> 285, NA, NA, 493, 468, 264, 171, 195, 137, 113, 100, 10…

$ no2 <int> 39, NA, NA, 52, 78, 42, 38, 51, 42, 39, 34, 38, 41, 42,…

$ o3 <int> 1, NA, 3, 3, 2, 0, 0, 0, 1, 2, 7, 8, 9, 8, 9, 9, 12, 14…

$ pm10 <int> 29, 37, 34, 35, 34, 16, 11, 12, 12, 12, 10, 11, 13, 17,…

$ so2 <dbl> 4.7225, NA, 6.8300, 7.6625, 8.0700, 5.5050, 4.2300, 3.8…

$ co <dbl> 3.3725, NA, 9.6025, 10.2175, 8.9125, 3.0525, 2.2650, 1.…

$ pm25 <int> NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA,…

$ pollution_bins <fct> pm10 22 to 31, pm10 31 to 44, pm10 31 to 44, pm10 31 to…Rows: 52,158

Columns: 13

$ date <dttm> 1998-01-01 00:00:00, 1998-01-01 02:00:00, 1998-01-01 03:00:…

$ ws <dbl> 0.60, 2.76, 2.16, 2.40, 3.00, 3.00, 3.00, 3.36, 3.96, 6.36, …

$ wd <int> 280, 190, 170, 180, 190, 140, 170, 170, 170, 180, 190, 180, …

$ nox <int> 285, NA, 493, 468, 264, 171, 195, 137, 113, 100, 109, 110, 1…

$ no2 <int> 39, NA, 52, 78, 42, 38, 51, 42, 39, 34, 38, 41, 42, 49, 32, …

$ o3 <int> 1, 3, 3, 2, 0, 0, 0, 1, 2, 7, 8, 9, 8, 9, 9, 12, 14, 16, 18,…

$ pm10 <int> 29, 34, 35, 34, 16, 11, 12, 12, 12, 10, 11, 13, 17, 20, 18, …

$ so2 <dbl> 4.7225, 6.8300, 7.6625, 8.0700, 5.5050, 4.2300, 3.8750, 3.34…

$ co <dbl> 3.3725, 9.6025, 10.2175, 8.9125, 3.0525, 2.2650, 1.9950, 1.4…

$ pm25 <int> NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, …

$ o3_cuts <fct> o3 0 to 2, o3 2 to 4, o3 2 to 4, o3 0 to 2, o3 0 to 2, o3 0 …

$ pm10_cuts <fct> pm10 22 to 31, pm10 31 to 44, pm10 31 to 44, pm10 31 to 44, …

$ so2_cuts <fct> so2 4 to 6.46, so2 6.46 to 63.2, so2 6.46 to 63.2, so2 6.46 …Most of the time users do not have to call cutData() directly because most functions have a type option that is used to call cutData() directly, e.g.,

polarPlot(mydata, pollutant = "so2", type = "season")However, it can be useful to call cutData() before supplying the data to a function in a few cases. Commonly, this is to have more control over how the data is conditioned - e.g., to use the hemisphere or n.levels arguments of cutData() directly. More details can be found in the cutData() help file.

28.3 Selecting run lengths of values above a threshold — pollution episodes

A seemingly easy thing to do that has relevance to air pollution episodes is to select run lengths of contiguous values of a pollutant above a certain threshold. For example, one might be interested in selecting O3 concentrations where there are at least 8 consecutive hours above 90~ppb. In other words, a selection that combines both a threshold and persistence. These periods can be very important from a health perspective and it can be useful to study the conditions under which they occur. But how do you select such periods easily? The selectRunning utility function has been written to do this. It could be useful for all sorts of situations e.g.

Selecting hours when primary pollutant concentrations are persistently high — and then applying other openair functions to analyse the data in more depth.

In the study of particle suspension or deposition etc. it might be useful to select hours when wind speeds remain high or rainfall persists for several hours to see how these conditions affect particle concentrations.

It could be useful in health impact studies to select blocks of data where pollutant concentrations remain above a certain threshold.

As an example we are going to consider O3 concentrations at a semi-rural site in south-west London (Teddington). The data can be downloaded as follows:

ted <- openair::importImperial(site = "td0", year = 2005:2009, meteo = TRUE)

## see how many rows there are

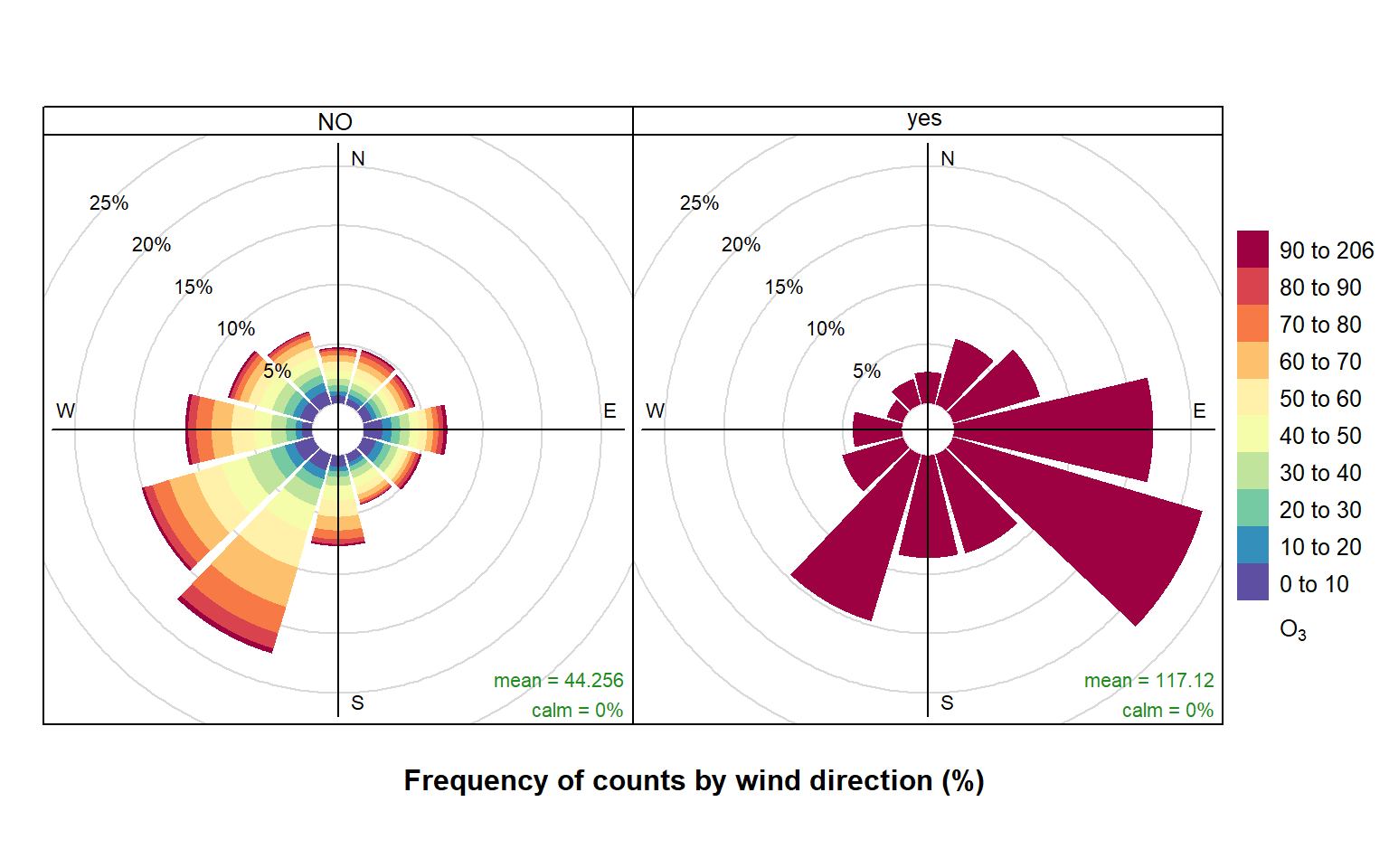

nrow(ted)[1] 43824We are going to contrast two pollution roses of O3 concentration. The first shows hours where the criterion is not met, and the second where it is met. The subset of hours is defined by O3 concentrations above 90 ppb for periods of at least 8-hours i.e. what might be considered as ozone episode conditions.

ted <- selectRunning(ted, pollutant = "o3", threshold = 90, run.len = 8)The selectRunning() function returns a new column that flags whether the condition is met or not. The user can control the text provided, which by default is “yes” and “no”, as well as the name of the column that is appended (defaulting to "criterion").

table(ted$criterion)

no yes

42425 1399 Now we are going to produce two pollution roses shown in Figure 28.1. Note, however that many other types of analysis could be carried out now the data have been partitioned.

pollutionRose(

ted,

pollutant = "o3",

type = "criterion"

)The results are shown in Figure 28.1. The pollution rose for the “no” criterion (left plot of Figure 28.1) shows that the highest O3 concentrations tend to occur for wind directions from the south-west, where there is a high proportion of measurements. By contrast, the when the criterion is met (right plot of Figure 28.1) is very different. In this case there is a clear set of conditions where these criteria are met i.e. lengths of at least 8-hours where the O3 concentration is at least 90 ppb. It is clear the highest concentrations are dominated by south-easterly conditions i.e. corresponding to easterly flow from continental Europe where there has been time to the O3 chemistry to take place.

The code below shows (as an example), that the summer of 2006 had a high proportion of conditions where the criterion was met.

timeProp(

ted,

pollutant = "o3",

proportion = "criterion",

avg.time = "month",

cols = "viridis"

)It is also useful to consider what controls the highest NOx concentrations at a central London roadside site. For example, the code below (not plotted) shows very strongly that the persistently highest NOx concentrations are dominated by south-westerly winds. As mentioned earlier, there are many other types of analysis that can be carried out now the data set identifies where the criterion is or is not met.

episode <- selectRunning(

mydata,

pollutant = "nox",

threshold = 500,

run.len = 5

)

pollutionRose(episode, pollutant = "nox", type = "criterion")Note that selectRunning() will fail if there are duplicate dates within the data frame. If multiple sites worth of data are contained within a single data frame, use type = "site" (or whatever column identifies different sites).

28.4 Aggregating data by different time intervals

Aggregating data by different averaging periods is a common and important task. There are many reasons for aggregating data in this way:

Data sets may have different averaging periods and there is a need to combine them. For example, the task of combining an hourly air quality data set with a 15-minute average meteorological data set. The need here would be to aggregate the 15-minute data to 1-hour before merging.

It is extremely useful to consider data with different averaging times straightforwardly. Plotting a very long time series of hourly or higher resolution data can hide the main features and it would be useful to apply a specific (but flexible) averaging period to the data for plotting.

Those who make measurements during field campaigns (particularly for academic research) may have many instruments with a range of different time resolutions. It can be useful to re-calculate time series with a common averaging period; or maybe help reduce noise.

It is useful to calculate statistics other than means when aggregating e.g. percentile values, maximums etc.

For statistical analysis there can be short-term autocorrelation present. Being able to choose a longer averaging period is sometimes a useful strategy for minimising autocorrelation.

In aggregating data in this way, there are a couple of other issues that can be useful to deal with at the same time. First, the calculation of proper vector-averaged wind direction is essential. Second, sometimes it is useful to set a minimum number of data points that must be present before the averaging is done. For example, in calculating monthly averages, it may be unwise to not account for data capture if some months only have a few valid points.

When a data capture threshold is set through data.thresh it is necessary for timeAverage() to know what the original time interval of the input time series is. The function will try and calculate this interval based on the most common time gap (and will print the assumed time gap to the screen). This works fine most of the time but there are occasions where it may not e.g. when very few data exist in a data frame. In this case the user can explicitly specify the interval through interval in the same format as avg.time e.g. interval = "month". It may also be useful to set start.date and end.date if the time series do not span the entire period of interest. For example, if a time series ended in October and annual means are required, setting end.date to the end of the year will ensure that the whole period is covered and that data.thresh is correctly calculated. The same also goes for a time series that starts later in the year where start.date should be set to the beginning of the year.

All these issues are (hopefully) dealt with by the timeAverage() function. The options are shown below, but as ever it is best to check the help that comes with the openair package.

To calculate daily means from hourly (or higher resolution) data:

daily <- timeAverage(mydata, avg.time = "day")

daily# A tibble: 2,731 × 10

date ws wd nox no2 o3 pm10 so2 co pm25

<dttm> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 1998-01-01 00:00:00 6.84 190. 154. 39.4 6.87 18.2 3.15 2.70 NA

2 1998-01-02 00:00:00 7.07 226. 132. 39.5 6.48 27.8 3.94 1.77 NA

3 1998-01-03 00:00:00 11.0 221. 120. 38.0 8.41 20.2 3.20 1.74 NA

4 1998-01-04 00:00:00 11.5 219. 105. 35.3 9.61 21.0 2.96 1.62 NA

5 1998-01-05 00:00:00 6.61 238. 175. 46.0 4.96 24.2 4.52 2.13 NA

6 1998-01-06 00:00:00 4.38 196. 214. 45.3 1.35 34.6 5.70 2.53 NA

7 1998-01-07 00:00:00 7.61 218. 193. 44.9 4.42 31.0 5.67 2.48 NA

8 1998-01-08 00:00:00 8.58 215. 161. 43.1 4.96 36 4.68 2.10 NA

9 1998-01-09 00:00:00 6.7 202. 163. 38 3.62 38.0 5.13 2.36 NA

10 1998-01-10 00:00:00 2.98 164. 219. 44.9 0.375 37.0 4.91 2.23 NA

# ℹ 2,721 more rowsMonthly 95th percentile values:

monthly <- timeAverage(

mydata,

avg.time = "month",

statistic = "percentile",

percentile = 95

)

monthly# A tibble: 90 × 10

date ws wd nox no2 o3 pm10 so2 co pm25

<dttm> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 1998-01-01 00:00:00 11.2 41.3 371 68.6 14 53 11.1 3.99 NA

2 1998-02-01 00:00:00 8.16 10.5 524. 92 7 68.9 17.5 5.63 NA

3 1998-03-01 00:00:00 10.6 37.9 417. 85 15 61 18.4 4.85 NA

4 1998-04-01 00:00:00 8.16 43.6 384 81.5 20 52 14.6 4.17 NA

5 1998-05-01 00:00:00 7.56 43.2 300 80 25 61 12.7 3.55 40

6 1998-06-01 00:00:00 8.47 42.7 377 74.2 15 53 12.2 4.28 33.9

7 1998-07-01 00:00:00 9.22 34.4 386. 80.0 NA 52.4 13.9 4.52 32

8 1998-08-01 00:00:00 7.92 43.7 337. 87.0 16 58.2 13.0 3.78 38

9 1998-09-01 00:00:00 6 46.3 334. 81.3 14 64 18.2 4.25 47

10 1998-10-01 00:00:00 12 37.9 439. 84 15.1 54 12.0 4.81 33

# ℹ 80 more rows2-week averages but only calculate if at least 75% of the data are available:

twoweek <- timeAverage(mydata, avg.time = "2 week", data.thresh = 75)

twoweek# A tibble: 196 × 10

date ws wd nox no2 o3 pm10 so2 co pm25

<dttm> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 1997-12-29 00:00:00 6.98 204. 167. 41.4 4.63 29.3 4.47 2.17 NA

2 1998-01-12 00:00:00 4.91 207. 173. 42.1 4.70 28.8 5.07 1.86 NA

3 1998-01-26 00:00:00 2.78 330. 233. 51.4 2.30 34.9 8.07 2.45 NA

4 1998-02-09 00:00:00 4.43 216. 276. 57.1 2.63 43.7 8.98 2.94 NA

5 1998-02-23 00:00:00 6.89 243. 248. 56.7 4.99 28.8 9.79 2.57 NA

6 1998-03-09 00:00:00 2.97 309. 160. 44.8 5.64 32.7 8.65 1.62 NA

7 1998-03-23 00:00:00 4.87 185. 224. 53.6 5.52 35.9 10.2 2.34 NA

8 1998-04-06 00:00:00 3.24 306. 144. 43.4 10.1 23.8 5.48 1.40 NA

9 1998-04-20 00:00:00 4.38 199. 177. 47.6 10.5 31.4 5.54 1.73 NA

10 1998-05-04 00:00:00 3.97 4.23 134. 45.5 10.2 38.6 5.49 1.41 24.6

# ℹ 186 more rowsNote that timeAverage() has a type option to allow for the splitting of variables by a grouping variable. The most common use for type is when data are available for different sites and the averaging needs to be done on a per site basis. That being said, unlike other data utility functions, timeAverage() will warn if there are duplicate dates in the input data frame but still allow the calculation to continue; occasionally averaging across multiple sites may be a legitimate thing to do (e.g., averaging across many low-cost air quality sensors to calculate a more accurate ‘consensus’ time series).

First, retaining by site averages:

# import some data for two sites

dat <- importUKAQ(c("kc1", "my1"), year = 2011:2013)

# annual averages by site

timeAverage(dat, avg.time = "year", type = "site")# A tibble: 6 × 17

site date co nox no2 no o3 so2 pm10 pm2.5

<fct> <dttm> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 London Ma… 2011-01-01 00:00:00 0.656 306. 97.2 137. 18.5 6.86 38.4 24.5

2 London Ma… 2012-01-01 00:00:00 0.589 313. 94.0 143. 15.0 8.13 30.8 21.5

3 London Ma… 2013-01-01 00:00:00 0.506 281. 84.7 128. 17.7 5.98 29.1 20.1

4 London N.… 2011-01-01 00:00:00 0.225 53.8 36.1 11.6 39.4 2.06 23.7 16.3

5 London N.… 2012-01-01 00:00:00 0.266 57.4 36.7 13.3 38.5 2.03 20.2 14.6

6 London N.… 2013-01-01 00:00:00 0.250 57.9 36.9 13.7 38.4 2.01 23.1 14.7

# ℹ 7 more variables: v10 <dbl>, v2.5 <dbl>, nv10 <dbl>, nv2.5 <dbl>, ws <dbl>,

# wd <dbl>, air_temp <dbl>Retain site name and site code:

# can also retain site code

timeAverage(dat, avg.time = "year", type = c("site", "code"))# A tibble: 6 × 18

site code date co nox no2 no o3 so2 pm10

<fct> <fct> <dttm> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 London N.… KC1 2011-01-01 00:00:00 0.225 53.8 36.1 11.6 39.4 2.06 23.7

2 London N.… KC1 2012-01-01 00:00:00 0.266 57.4 36.7 13.3 38.5 2.03 20.2

3 London N.… KC1 2013-01-01 00:00:00 0.250 57.9 36.9 13.7 38.4 2.01 23.1

4 London Ma… MY1 2011-01-01 00:00:00 0.656 306. 97.2 137. 18.5 6.86 38.4

5 London Ma… MY1 2012-01-01 00:00:00 0.589 313. 94.0 143. 15.0 8.13 30.8

6 London Ma… MY1 2013-01-01 00:00:00 0.506 281. 84.7 128. 17.7 5.98 29.1

# ℹ 8 more variables: pm2.5 <dbl>, v10 <dbl>, v2.5 <dbl>, nv10 <dbl>,

# nv2.5 <dbl>, ws <dbl>, wd <dbl>, air_temp <dbl>Average all data across sites (drops site and code).

timeAverage(dat, avg.time = "year")# A tibble: 3 × 16

date co nox no2 no o3 so2 pm10 pm2.5 v10

<dttm> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 2011-01-01 00:00:00 0.439 181. 67.1 74.9 31.5 4.31 31.4 20.5 5.40

2 2012-01-01 00:00:00 0.424 182. 64.7 76.7 26.9 5.08 25.6 18.1 4.23

3 2013-01-01 00:00:00 0.378 169. 60.7 70.7 28.0 3.79 26.8 17.4 4.29

# ℹ 6 more variables: v2.5 <dbl>, nv10 <dbl>, nv2.5 <dbl>, ws <dbl>, wd <dbl>,

# air_temp <dbl>timeAverage() also works the other way in that it can be used to derive higher temporal resolution data e.g. hourly from daily data or 15-minute from hourly data. An example of usage would be the combining of daily mean particle data with hourly meteorological data. There are two ways these two data sets can be combined: either average the meteorological data to daily means or calculate hourly means from the particle data. The timeAverage() function when used to ‘expand’ data in this way will repeat the original values the number of times required to fill the new time scale. In the example below we calculate 15-minute data from hourly data. As it can be seen, the first line is repeated four times and so on.

data15 <- timeAverage(mydata, avg.time = "15 min", fill = TRUE)

head(data15, 20)# A tibble: 20 × 10

date ws wd nox no2 o3 pm10 so2 co pm25

<dttm> <dbl> <int> <int> <int> <int> <int> <dbl> <dbl> <int>

1 1998-01-01 00:00:00 0.6 280 285 39 1 29 4.72 3.37 NA

2 1998-01-01 00:15:00 0.6 280 285 39 1 29 4.72 3.37 NA

3 1998-01-01 00:30:00 0.6 280 285 39 1 29 4.72 3.37 NA

4 1998-01-01 00:45:00 0.6 280 285 39 1 29 4.72 3.37 NA

5 1998-01-01 01:00:00 2.16 230 NA NA NA 37 NA NA NA

6 1998-01-01 01:15:00 2.16 230 NA NA NA 37 NA NA NA

7 1998-01-01 01:30:00 2.16 230 NA NA NA 37 NA NA NA

8 1998-01-01 01:45:00 2.16 230 NA NA NA 37 NA NA NA

9 1998-01-01 02:00:00 2.76 190 NA NA 3 34 6.83 9.60 NA

10 1998-01-01 02:15:00 2.76 190 NA NA 3 34 6.83 9.60 NA

11 1998-01-01 02:30:00 2.76 190 NA NA 3 34 6.83 9.60 NA

12 1998-01-01 02:45:00 2.76 190 NA NA 3 34 6.83 9.60 NA

13 1998-01-01 03:00:00 2.16 170 493 52 3 35 7.66 10.2 NA

14 1998-01-01 03:15:00 2.16 170 493 52 3 35 7.66 10.2 NA

15 1998-01-01 03:30:00 2.16 170 493 52 3 35 7.66 10.2 NA

16 1998-01-01 03:45:00 2.16 170 493 52 3 35 7.66 10.2 NA

17 1998-01-01 04:00:00 2.4 180 468 78 2 34 8.07 8.91 NA

18 1998-01-01 04:15:00 2.4 180 468 78 2 34 8.07 8.91 NA

19 1998-01-01 04:30:00 2.4 180 468 78 2 34 8.07 8.91 NA

20 1998-01-01 04:45:00 2.4 180 468 78 2 34 8.07 8.91 NAThe timePlot() function can apply this function directly to make it very easy to plot data with different averaging times and statistics.

28.5 Calculating percentiles

calcPercentile() makes it straightforward to calculate percentiles for a single pollutant. It can take account of different averaging periods, data capture thresholds — see Section 28.4 for more details.

calcPercentile() shares many of its arguments with timeAverage(), such as type, data.thresh, start.date, and end.date. It also has the argument prefix to define how the columns it creates are labelled.

For example, to calculate the 25, 50, 75 and 95th percentiles of O3 concentration by year:

calcPercentile(

mydata,

pollutant = "o3",

percentile = c(25, 50, 75, 95),

avg.time = "year"

)# A tibble: 8 × 5

date percentile.25 percentile.50 percentile.75 percentile.95

<dttm> <dbl> <dbl> <dbl> <dbl>

1 1998-01-01 00:00:00 2 4 7 16

2 1999-01-01 00:00:00 2 4 9 21

3 2000-01-01 00:00:00 2 4 9 22

4 2001-01-01 00:00:00 2 4 10 24

5 2002-01-01 00:00:00 2 4 10 24

6 2003-01-01 00:00:00 2 4 11 24

7 2004-01-01 00:00:00 2 5 11 23

8 2005-01-01 00:00:00 3 7 16 2828.6 Correlation matrices

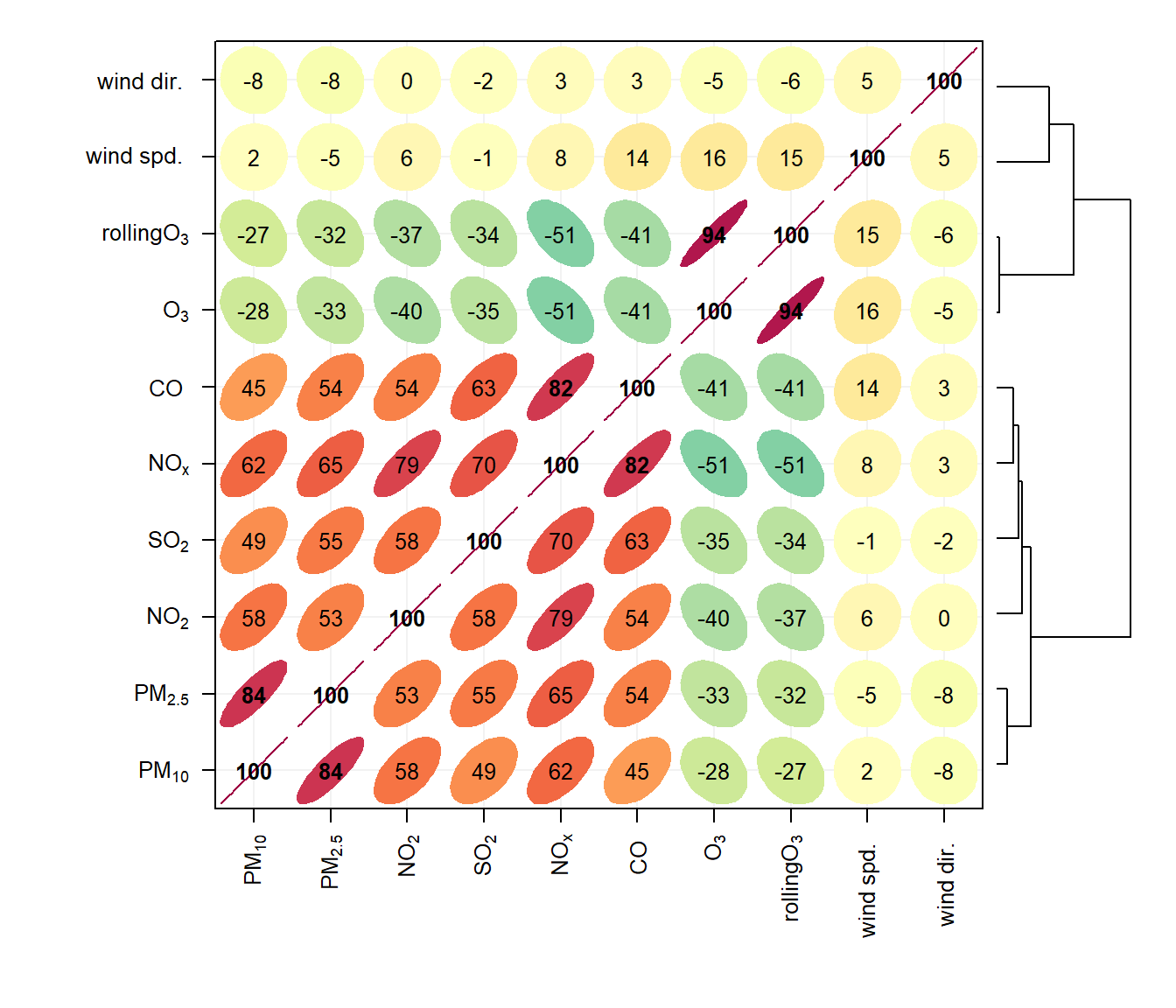

Understanding how different variables are related to one another is always important. However, it can be difficult to easily develop an understanding of the relationships when many different variables are present. One of the useful techniques used is to plot a correlation matrix, which provides the correlation between all pairs of data. The basic idea of a correlation matrix has been extended to help visualise relationships between variables by (Friendly 2002) and (Sarkar 2007).

The corPlot() function shows the correlation coded in three ways: by shape (ellipses), colour and the numeric value. The ellipses can be thought of as visual representations of scatter plot. With a perfect positive correlation a line at 45 degrees positive slope is drawn. For zero correlation the shape becomes a circle — imagine a ‘fuzz’ of points with no relationship between them.

With many variables it can be difficult to see relationships between variables i.e. which variables tend to behave most like one another. For this reason hierarchical clustering is applied to the correlation matrices to group variables that are most similar to one another (if cluster = TRUE.)

An example of the corPlot() function is shown in Figure 28.2. In this Figure it can be seen the highest correlation coefficient is between PM10 and PM2.5 (r = 0. 84) and that the correlations between SO2, NO2 and NOx are also high. O3 has a negative correlation with most pollutants, which is expected due to the reaction between NO and O3. It is not that apparent in Figure 28.2 that the order the variables appear is due to their similarity with one another, through hierarchical cluster analysis. In this case we have chosen to also plot a dendrogram that appears on the right of the plot. Dendrograms provide additional information to help with visualising how groups of variables are related to one another. Note that dendrograms can only be plotted for type = "default" i.e. for a single panel plot.

corPlot(mydata, dendrogram = TRUE)Note also that the corPlot() accepts a type option, so it possible to condition the data in many flexible ways, although this may become difficult to visualise with too many panels. For example:

corPlot(mydata, type = "season")When there are a very large number of variables present, the corPlot() function is a very effective way of quickly gaining an idea of how variables are related. As an example (not plotted) it is useful to consider the hydrocarbons measured at Marylebone Road. There is a lot of information in the hydrocarbon plot (about 40 species), but due to the hierarchical clustering it is possible to see that isoprene, ethane and propane behave differently to most of the other hydrocarbons. This is because they have different (non-vehicle exhaust) origins. Ethane and propane results from natural gas leakage whereas isoprene is biogenic in origin (although some is from vehicle exhaust too). It is also worth considering how the relationships change between the species over the years as hydrocarbon emissions are increasingly controlled, or maybe the difference between summer and winter blends of fuels and so on.

hc <- importUKAQ(site = "my1", year = 2005, hc = TRUE)

## now it is possible to see the hydrocarbons that behave most

## similarly to one another

corPlot(hc)